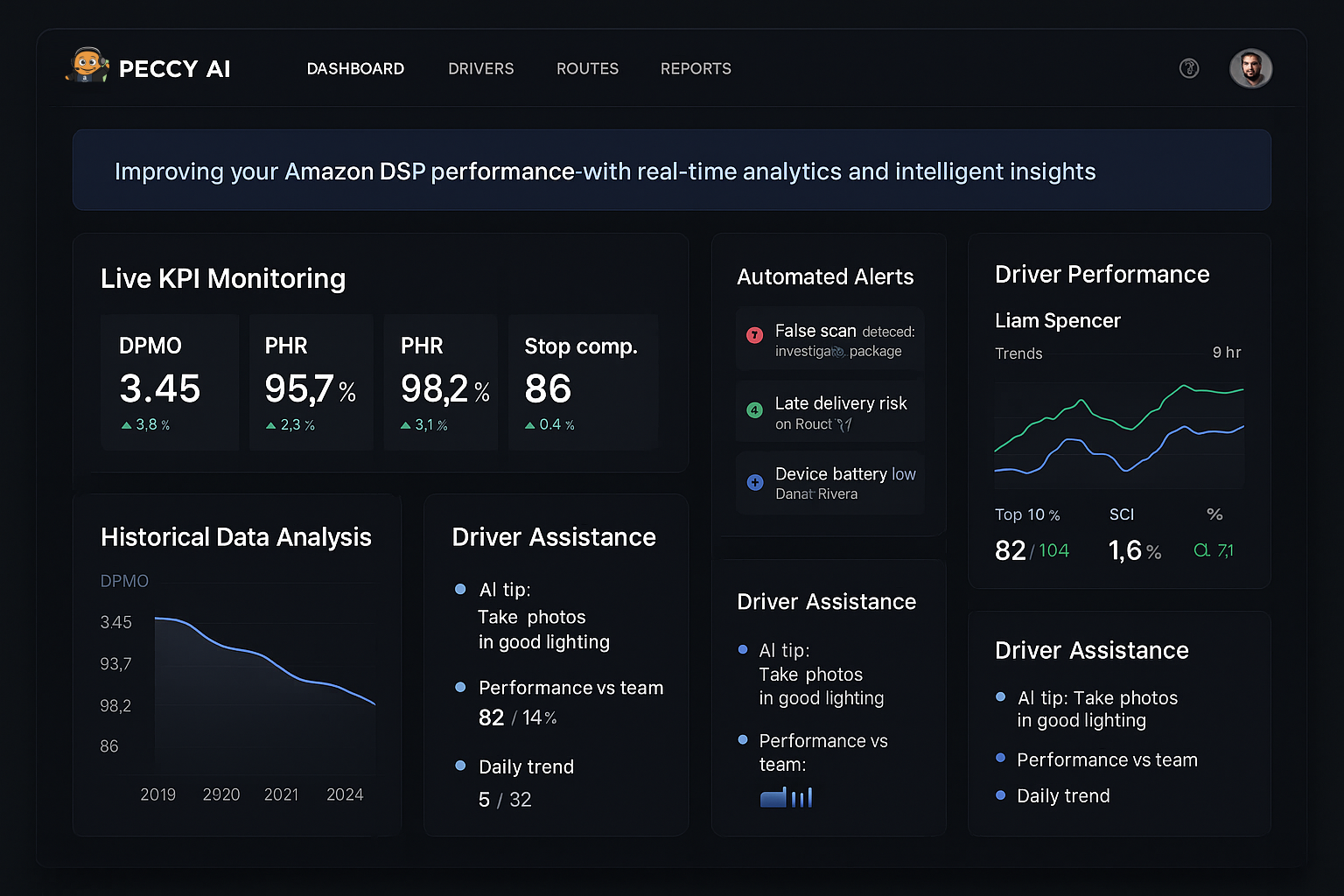

In the fast-paced world of Amazon DSP deliveries, performance is everything. Every package delivered on time, every correct scan, and every customer call made can mean the difference between success and failure. That’s why we created Peccy AI, the ultimate tool for real-time KPI tracking and performance optimization.

🔍 What is Peccy AI?

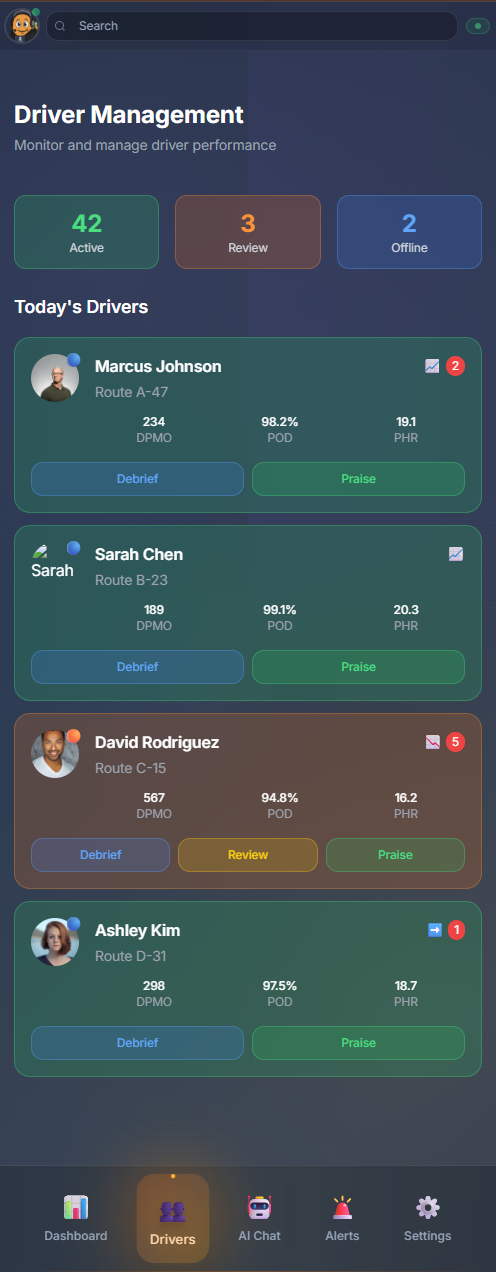

Peccy AI is an intelligent analytics system designed to track, analyze, and improve the performance of Amazon DSP Runex drivers.

It provides real-time insights, automated alerts, and detailed reports to help our team stay on top of their KPIs.


🌟 Why Peccy AI?

MegaAPI Hub for DSPs

📌 Live KPI Monitoring – Stay up to date with performance metrics at all times.

📌 Automated Alerts – Get notified instantly about potential issues before they escalate.

📌 Historical Data Analysis – Identify trends and make data-driven decisions.

📌 Driver Performance Insights – Recognize top performers and support those who need improvement.

📌 Increased Efficiency – Optimize daily operations and ensure compliance with Amazon’s standards.

As an engineer tasked with designing Peccy AI, my goal is to create a transformative system that elevates Amazon’s delivery operations. This isn’t just about building an app—it’s about reimagining how drivers and Delivery Service Partners (DSPs) work by embedding artificial intelligence and large language models into the heart of logistics. Peccy AI optimizes routes, boosts key performance indicators (KPIs), and resolves operational challenges with precision, all while keeping drivers supported and DSPs informed. Here’s how I’d bring this vision to life, tackling the technical complexities and delivering real-world impact.

Amazon’s delivery system is a well-oiled machine, but it faces challenges: rigid routing algorithms, inconsistent driver performance, and delays from unexpected issues like traffic or package errors. Current tools like Cortex and the Vehicle Management System (VMS) are robust but lack the real-time adaptability and personalized support needed for peak efficiency. Peccy AI addresses these gaps with a frontend application that integrates AI-driven decision-making and LLMs, creating a seamless experience for drivers and DSPs. It’s about making every delivery faster, every driver better, and every operation smarter.

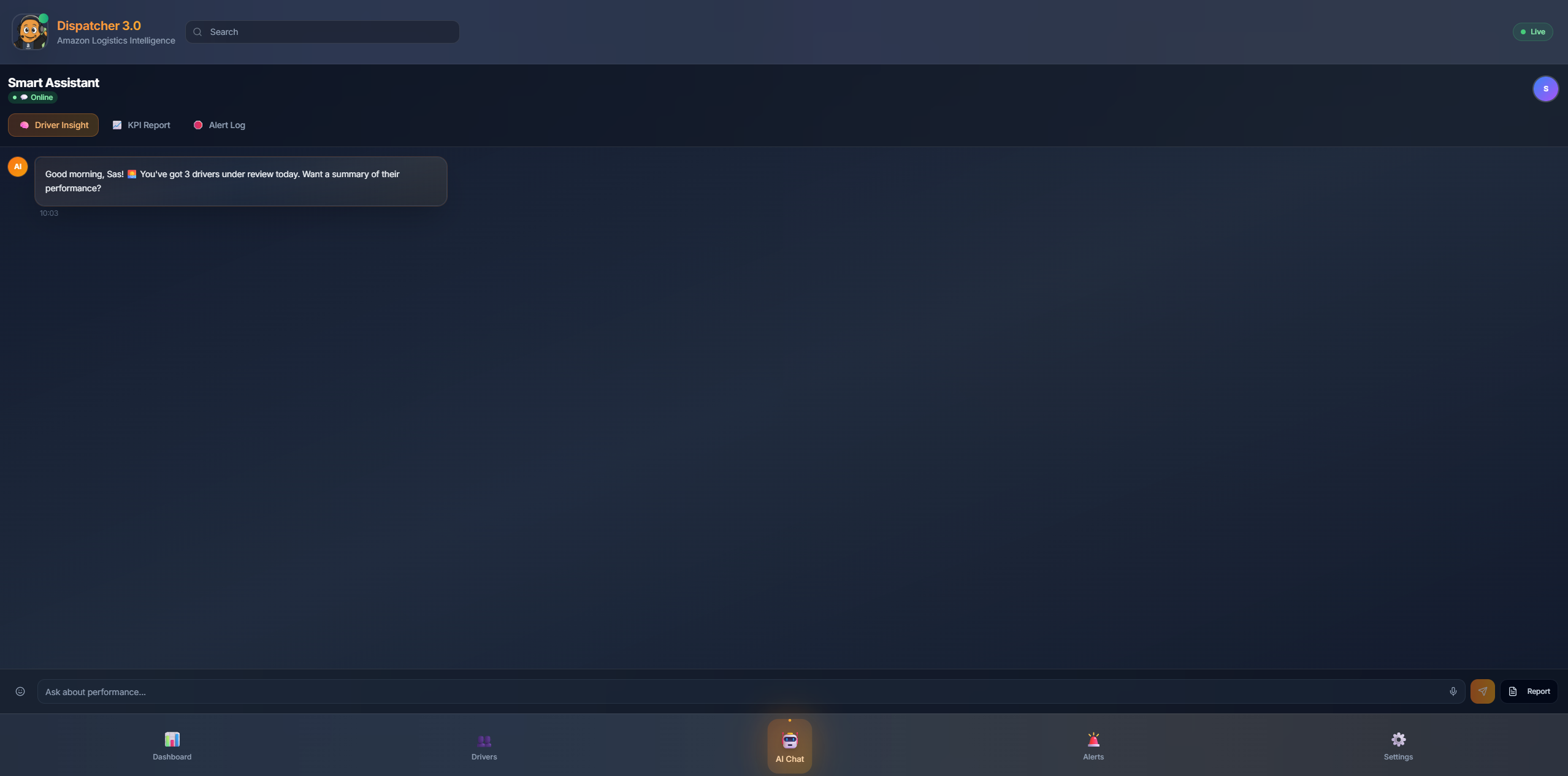

The system revolves around a driver- and DSP-facing frontend, a robust backend, and an AI layer that ties it all together. The frontend, built with React, delivers intuitive dashboards tailored to each user. Drivers see real-time routes, delivery progress, and KPI feedback—like PHR or scan compliance—in a clean, mobile-friendly interface designed with Tailwind CSS for responsiveness. DSPs get a broader view, with fleet performance metrics, driver analytics, and cost insights displayed on a tablet or desktop. A real-time chat feature, powered by Amazon Chime or an LLM-driven chatbot, lets drivers report issues and get instant guidance. This frontend isn’t just a window into data—it’s a dynamic tool that translates complex AI outputs into actionable steps.

The backend, powered by Node.js and Express, orchestrates data flow with precision. It pulls route data from Cortex, vehicle stats from VMS, and communication logs from Amazon Chime, storing everything in MongoDB for its flexibility with semi-structured delivery data. APIs ensure seamless integration, delivering low-latency updates so drivers get rerouting suggestions instantly, and DSPs see fleet performance in real time. The backend’s role is to keep the system responsive, handling thousands of simultaneous requests without breaking a sweat.

The AI layer is where Peccy AI differentiates itself. Small language models (SLMs), like optimized versions of LLaMA, run on drivers’ smartphones for low-latency, offline tasks: suggesting route adjustments based on GPS, flagging potential delivery errors, or drafting issue reports. These models are lightweight to accommodate resource-constrained devices, ensuring drivers stay productive even without connectivity. For more complex tasks, cloud-hosted LLMs—potentially Meta AI or a fine-tuned LLaMA on AWS EC2—analyze delivery logs, generate personalized training content, and power a chatbot that answers driver queries with natural language understanding. For example, a driver asking, “How do I handle a missing package?” gets a clear, context-aware response in seconds.

The AI algorithms are tailored to logistics challenges. Route optimization uses reinforcement learning, which dynamically adjusts paths by weighing real-time factors like traffic, weather, and package volume. Think of it as a navigation system that learns from every delivery, finding the fastest route while minimizing fuel use. For KPIs like DPMO, PHR, or scan rates, LSTM models forecast trends based on historical data, helping DSPs anticipate issues before they escalate. Anomaly detection, powered by supervised learning techniques like SVMs, flags outliers—say, a driver missing scans repeatedly—so DSPs can intervene with targeted support. These models aren’t static; they’re retrained weekly using fresh delivery data to stay accurate.
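
To make the outlier-flagging idea concrete, here is a minimal sketch. It deliberately swaps the supervised SVM mentioned above for a simple fleet-wide z-score, so it runs without any training data or external libraries. The function name `flag_scan_outliers`, the driver IDs, and the threshold of 2.0 are illustrative assumptions, not part of Peccy AI.

```python
from statistics import mean, pstdev


def flag_scan_outliers(scan_rates: dict[str, float], z_threshold: float = 2.0) -> list[str]:
    """Return IDs of drivers whose scan rate is unusually low versus the fleet.

    Simple z-score stand-in for the SVM-based detector described in the text.
    """
    values = list(scan_rates.values())
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # Every driver has the same rate, so nobody stands out.
        return []
    # Only rates below the fleet mean count: a high scan rate is not a problem.
    return sorted(
        driver for driver, rate in scan_rates.items()
        if (mu - rate) / sigma > z_threshold
    )


# Hypothetical daily scan-compliance rates for a small fleet.
fleet = {
    "D01": 0.98, "D02": 0.97, "D03": 0.99, "D04": 0.96,
    "D05": 0.98, "D06": 0.97, "D07": 0.99, "D08": 0.70,
}
print(flag_scan_outliers(fleet))
```

A z-score is only meaningful with enough drivers in the sample; with a very small fleet, a fixed compliance floor may be the more honest rule.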

The LLM component adds a human touch. It analyzes driver ScoreCards to generate tailored training content, like tips for improving scan compliance, and summarizes operational issues for DSPs in clear, concise reports. The chatbot, built with LangChain, understands logistics jargon and responds to driver queries instantly, reducing reliance on support teams. For example, if a driver reports a traffic delay, the LLM suggests a reroute while notifying the DSP, streamlining communication.

Peccy AI builds on Amazon’s existing tools. Cortex provides baseline route data, but Peccy AI enhances it with real-time AI adjustments, like rerouting around a sudden roadblock. VMS feeds vehicle data, which the system uses to optimize fuel consumption and maintenance schedules, cutting operational costs. Amazon Chime handles communication, but the LLM chatbot adds intelligence, summarizing issues or escalating urgent ones. Security is non-negotiable—end-to-end encryption protects data, and sensitive logs are stored in Dataverse for compliance. For added control, Ollama enables on-premise LLM processing, keeping proprietary data secure.

Peccy AI is designed to deliver measurable results. The design targets a 10-15% reduction in delivery times through smarter routing and a 5-10% reduction in operational costs through optimized fuel and maintenance schedules. KPIs like PHR and scan rates should improve as drivers receive tailored training from the LLM. Drivers feel empowered, not micromanaged, thanks to the chatbot and clear feedback. DSPs gain real-time visibility into fleet performance, so they can make decisions faster. The system aims to resolve issues like traffic delays or package errors in seconds, keeping operations smooth.

Challenges are inevitable. High LLM compute costs are mitigated with efficient training and open-source models. Limited device resources for SLMs are addressed by optimizing for low latency. Driver adoption hinges on an intuitive UI and training that proves immediate value. Data privacy is ensured through encryption and secure storage, with Ollama providing an on-premise option for sensitive data.

Peccy AI isn’t just a tool—it’s a logistics engine that blends AI precision with human needs. It makes drivers more effective, DSPs more informed, and deliveries more reliable. By leveraging SLMs for on-device tasks and LLMs for deep analytics, it creates a scalable, secure system that evolves with Amazon’s needs. As an engineer, I see this as a chance to push boundaries, combining cutting-edge AI with real-world logistics to deliver not just packages, but progress.

Peccy AI is designed to act as an intelligent extension of an advanced Amazon dispatcher’s role, taking their daily responsibilities—route optimization, KPI monitoring, team coordination, fleet management, communication, training, and continuous improvement—and infusing them with AI and LLM capabilities to create a smarter, more responsive delivery ecosystem. The system is built to handle the complexity of managing a fleet of drivers while maintaining precision, fostering collaboration, and ensuring customer satisfaction.

The core of a dispatcher’s day revolves around route optimization, where they plan and adjust delivery paths using tools like Cortex and VMS. Peccy AI enhances this by integrating a reinforcement learning model that dynamically optimizes routes in real time. Unlike static Cortex plans, the system processes live data—traffic, weather, delivery volume forecasts—and suggests adjustments instantly. For example, if a road closure disrupts a driver’s route, the AI recalculates the path, minimizing delays and fuel costs, and pushes the update to the driver’s mobile dashboard. This builds on the dispatcher’s expertise in tools like Cortex, automating repetitive planning tasks while preserving their ability to override suggestions for nuanced decisions.
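
The road-closure example can be sketched with a plain shortest-path search. This is not the reinforcement learning model described above. It is Dijkstra's algorithm over a tiny hand-made road graph, shown only to illustrate what "recalculating the path" means. The graph, the node names, and the `closed` parameter are assumptions made for this example.

```python
import heapq
from typing import Optional

# Directed road graph: node -> {neighbour: travel time in minutes}.
Graph = dict[str, dict[str, float]]


def shortest_route(
    graph: Graph,
    start: str,
    goal: str,
    closed: frozenset = frozenset(),
) -> Optional[tuple[float, list[str]]]:
    """Dijkstra's algorithm that skips any (from, to) road segment in `closed`."""
    queue = [(0.0, start, [start])]
    settled: dict[str, float] = {}
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in settled and settled[node] <= cost:
            continue  # Already reached this node at least as cheaply.
        settled[node] = cost
        for neighbour, minutes in graph.get(node, {}).items():
            if (node, neighbour) in closed:
                continue  # Road closure reported: do not use this segment.
            heapq.heappush(queue, (cost + minutes, neighbour, path + [neighbour]))
    return None  # Goal is unreachable with the current closures.


# Hypothetical road network around a single delivery stop.
roads: Graph = {
    "depot": {"A": 4.0, "B": 7.0},
    "A": {"stop": 2.0, "B": 1.0},
    "B": {"stop": 2.0},
}

print(shortest_route(roads, "depot", "stop"))
print(shortest_route(roads, "depot", "stop", closed=frozenset({("A", "stop")})))
```

The dispatcher's override described above fits naturally here: the search returns a suggestion, and nothing forces the app to apply it automatically.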

KPI monitoring is another critical responsibility, where dispatchers track metrics like DPMO, MENTOR, PHR, and scan rates to ensure compliance and reduce errors. Peccy AI automates this with LSTM-based forecasting models that analyze historical and real-time delivery data to predict KPI trends. For instance, if PHR compliance dips for a driver, the system flags it on the DSP’s dashboard, highlighting patterns like missed scans or late deliveries. The LLM component then generates actionable insights, such as recommending specific interventions to improve performance, mirroring the dispatcher’s proactive approach but scaling it across the fleet. This ensures the dispatcher’s focus on metrics like DPMO is amplified through predictive analytics, catching issues before they escalate.
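
As a rough illustration of trend-based flagging, the sketch below forecasts the next value of a KPI and compares it with a target. It uses simple exponential smoothing as a lightweight stand-in for the LSTM models described above. The function names, the smoothing factor, and the 0.97 target are assumptions chosen for the example.

```python
def forecast_next(history: list[float], alpha: float = 0.5) -> float:
    """One-step-ahead forecast using simple exponential smoothing."""
    if not history:
        raise ValueError("history must contain at least one value")
    level = history[0]
    for value in history[1:]:
        # Blend the newest observation with the running level.
        level = alpha * value + (1 - alpha) * level
    return level


def kpi_at_risk(history: list[float], target: float, alpha: float = 0.5) -> bool:
    """True when the forecast for the next period falls below the target."""
    return forecast_next(history, alpha) < target


# Hypothetical weekly scan-compliance values for one driver.
declining = [0.99, 0.98, 0.96, 0.93]
stable = [0.98, 0.98, 0.99]

print(kpi_at_risk(declining, target=0.97))
print(kpi_at_risk(stable, target=0.97))
```

A higher `alpha` reacts faster to recent weeks but also flags more noise, which is the same trade-off any forecasting model on the DSP dashboard would need to tune.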

Team coordination, such as conducting daily briefings, is streamlined through the app’s driver dashboard. Instead of manual briefings, Peccy AI delivers personalized updates to each driver’s device, detailing their routes, delivery targets, and KPI goals for the day. The LLM crafts these briefings in natural language, ensuring clarity and relevance, as if the dispatcher were speaking directly to the driver. This preserves the human touch of coordination while saving time, allowing dispatchers to focus on strategic oversight rather than routine communication.

Fleet management tasks, like monitoring vehicle usage and scheduling maintenance, are integrated into the system’s VMS connection. Peccy AI pulls vehicle data—fuel levels, mileage, maintenance schedules—and uses AI to optimize usage. For example, it prioritizes vehicles with lower fuel consumption for high-density routes, reducing costs in line with the dispatcher’s cost-saving achievements. Alerts for maintenance needs are pushed to DSPs, ensuring operational uptime without manual tracking, directly supporting the dispatcher’s focus on efficiency.
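
The vehicle prioritization rule can be expressed as a simple greedy pairing. The sketch below assumes that stop count is an acceptable proxy for route density and that fuel consumption per vehicle is already known from VMS data. The `Vehicle` and `Route` types and all values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vehicle:
    vehicle_id: str
    litres_per_100km: float


@dataclass(frozen=True)
class Route:
    route_id: str
    stops: int  # Used here as a proxy for route density.


def assign_vehicles(vehicles: list[Vehicle], routes: list[Route]) -> dict[str, str]:
    """Pair the most fuel-efficient vehicles with the densest routes."""
    by_efficiency = sorted(vehicles, key=lambda v: v.litres_per_100km)
    by_density = sorted(routes, key=lambda r: r.stops, reverse=True)
    # zip stops at the shorter list, so surplus routes or vehicles stay unassigned.
    return {r.route_id: v.vehicle_id for r, v in zip(by_density, by_efficiency)}


vans = [Vehicle("V1", 9.5), Vehicle("V2", 7.2), Vehicle("V3", 8.1)]
todays_routes = [Route("R1", 120), Route("R2", 180), Route("R3", 90)]
print(assign_vehicles(vans, todays_routes))
```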

Communication, a cornerstone of the dispatcher’s role, is enhanced through a hybrid system. While Amazon Chime remains the backbone for real-time collaboration with ORM teams and external partners, Peccy AI’s LLM-powered chatbot handles driver queries instantly. If a driver reports a damaged package via the app, the chatbot provides step-by-step guidance while summarizing the issue for the DSP, ensuring seamless resolution. This mirrors the dispatcher’s ability to resolve issues quickly under pressure, but scales it to handle multiple queries simultaneously, reducing communication bottlenecks.

Training and development for underperforming drivers is a key responsibility that Peccy AI elevates with LLMs. By analyzing weekly ScoreCards, the system identifies drivers struggling with metrics like scan rates and generates tailored training content—videos, tips, or step-by-step guides—delivered through the app. For instance, a driver with low PHR compliance might receive a short module on proper scanning techniques, reflecting the dispatcher’s targeted feedback approach. This automation scales the dispatcher’s training expertise, ensuring every driver gets personalized support without overwhelming the DSP.

Morning and afternoon operations are seamlessly integrated into the system’s workflow. In the morning, Peccy AI generates KW plans based on delivery volume forecasts, using AI to optimize routes in Cortex and assign drivers efficiently. Rostering plans are managed through the DSP dashboard, ensuring compliance with delivery targets. In the afternoon, the system shifts to KPI analysis and issue resolution, with the chatbot handling driver queries via Chime integration and the AI flagging trends for quality meetings. Operational outcomes are documented automatically, with the LLM summarizing trends and improvement opportunities, replicating the dispatcher’s reporting process with greater speed and depth.

Continuous improvement, a hallmark of the advanced dispatcher role, is baked into Peccy AI’s design. The system fine-tunes Cortex algorithms by feeding real-time delivery data into the reinforcement learning model, ensuring routes adapt to changing conditions. Cost-saving measures, like optimizing fuel usage, are driven by AI analysis of VMS data, building on the dispatcher’s cost-saving achievements. Communication channels are enhanced through the chatbot’s ability to summarize issues and suggest process improvements, keeping the system dynamic and responsive.

The dispatcher’s skills—logistics technology, data analysis, leadership, communication, adaptability, problem-solving, and attention to detail—are deeply embedded in Peccy AI. Proficiency in tools like Cortex, VMS, and Amazon Flex is reflected in the system’s integrations, with APIs pulling data seamlessly to power AI features. Advanced knowledge of SQL and Excel is mirrored in the backend’s MongoDB setup, optimized for fast KPI queries and reporting. The system’s data analysis capabilities, driven by LSTM and anomaly detection models, amplify the dispatcher’s ability to interpret KPIs and forecast trends, turning raw data into strategic insights.

Leadership and communication shine through the app’s design. The driver dashboard fosters collaboration by delivering clear, LLM-generated instructions, while the DSP dashboard empowers decision-making with real-time analytics. Adaptability is built into the AI’s ability to learn from new data, integrating technologies like SLMs for on-device processing or LLMs for cloud-based analytics. Problem-solving is enhanced by the system’s real-time issue resolution, with the chatbot and AI rerouting handling high-pressure situations. Attention to detail is ensured through automated error detection and precise reporting, minimizing mistakes in logistics execution.

Peccy AI aims to replicate and scale the dispatcher’s achievements. Their success in reducing delivery times through precise route adjustments is amplified by the reinforcement learning model, which optimizes routes dynamically. Cost savings from optimized vehicle usage and fuel consumption are enhanced by AI-driven fleet management, prioritizing efficiency. Improved PHR compliance and reduced error rates are supported by the LLM’s training modules, which target driver performance gaps. The dispatcher’s top-performer status across German delivery stations sets the benchmark for Peccy AI’s performance, with the system designed to achieve consistent, measurable improvements across the fleet.

The advanced dispatcher’s role—orchestrating logistics with precision, leveraging technology, and maintaining a customer-centric focus—is the blueprint for Peccy AI. The system acts as a force multiplier, automating routine tasks like route planning and KPI tracking while empowering dispatchers to focus on strategic oversight. Its real-time problem-solving capabilities, driven by AI and LLMs, ensure seamless operations under pressure, just as a dispatcher would. The customer-centric approach is preserved through features that prioritize on-time, error-free deliveries, enhancing customer satisfaction. Continuous improvement is woven into the system’s DNA, with AI models retrained regularly to adapt to new challenges, reflecting the dispatcher’s passion for innovation.

Amazon’s DSP program is a cornerstone of our logistics network, delivering millions of packages daily with precision and care. However, managing driver performance, ensuring compliance, and optimizing routes in real time present complex challenges. Peccy AI addresses these pain points by providing a scalable, AI-powered system that monitors, analyzes, and enhances every facet of DSP operations. Our mission is simple yet ambitious: to empower drivers and dispatchers with actionable insights, predictive intelligence, and personalized support, ensuring every delivery aligns with Amazon’s world-class standards.

Peccy AI is not just a tracking tool—it’s a proactive assistant that anticipates issues, optimizes workflows, and fosters a culture of continuous improvement. By combining advanced AI, real-time analytics, and Amazon’s own APIs, Peccy AI delivers unparalleled efficiency, safety, and customer satisfaction.

Peccy AI is more than a tool—it’s a strategic partner that transforms how Amazon DSPs operate. By integrating advanced AI, real-time data, and Amazon’s own APIs, we deliver a platform that empowers drivers, streamlines dispatching, and drives measurable ROI. Our focus on scalability, security, and compliance ensures Peccy AI aligns with Amazon’s vision for innovation and excellence.

Addressing the issue of drivers stealing products from packages is a sensitive challenge that requires a balance of prevention, detection, and correction while maintaining trust and operational efficiency. As an engineer designing Peccy AI, I’d leverage a Large Language Model (LLM) alongside other AI tools to tackle this problem systematically, integrating seamlessly with the dispatcher’s responsibilities and the broader Peccy AI system. Here’s how I’d approach it, embedding the solution into the system’s architecture to deter theft, detect suspicious behavior, and address incidents with precision.

Theft by drivers—whether taking items from packages or misreporting deliveries—undermines customer trust, increases costs, and impacts KPIs like DPMO (Defects Per Million Opportunities). The goal is to create a system that discourages theft proactively, identifies potential incidents in real time, and supports dispatchers in resolving issues without disrupting operations. The LLM’s natural language processing and contextual analysis capabilities make it a powerful tool for this, complementing the dispatcher’s skills in problem-solving, communication, and KPI monitoring.

A key driver of theft can be opportunity or lack of oversight, so Peccy AI uses the LLM to reinforce accountability and support drivers in adhering to protocols. The system’s driver dashboard, built in React, includes an LLM-powered chatbot that guides drivers through proper delivery procedures. For example, when a driver scans a package, the chatbot prompts them with context-aware reminders, such as, “Ensure the package is sealed and matches the order details before delivery.” These prompts, generated by the LLM analyzing delivery protocols and historical data, mirror the dispatcher’s role in team coordination and training, embedding best practices into daily workflows.

The LLM also delivers personalized training content, drawing on the dispatcher’s practice of using weekly ScoreCards to improve performance. If a driver has a history of delivery discrepancies, the LLM generates targeted modules—say, a short video on package handling ethics—delivered via the app. This proactive training reduces the temptation to steal by reinforcing accountability, aligning with the dispatcher’s focus on driver development and error reduction.

To detect potential theft, Peccy AI integrates anomaly detection with LLM-driven analysis. The system’s backend, powered by Node.js and MongoDB, tracks delivery data—package scans, GPS locations, and delivery confirmations—pulled from Cortex and Amazon Flex. An AI model, using supervised learning (e.g., SVM or neural networks), flags anomalies like a package marked as delivered but missing customer confirmation, or a driver deviating from their route without justification. These anomalies are fed to the LLM, which cross-references them with contextual data, such as delivery logs, driver history, and customer complaints.
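
Before any trained model exists, the two anomalies named above can be approximated with explicit rules. The following sketch is a rule-based stand-in, not the SVM or neural network itself. The `DeliveryEvent` fields and the 150-metre threshold are assumptions made for illustration, and a flag is only a prompt for human review, never proof of wrongdoing.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt


@dataclass(frozen=True)
class DeliveryEvent:
    package_id: str
    delivered: bool
    customer_confirmed: bool
    scan_lat: float
    scan_lon: float
    address_lat: float
    address_lon: float


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    earth_radius_m = 6_371_000
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * earth_radius_m * asin(sqrt(a))


def flag_event(event: DeliveryEvent, max_offset_m: float = 150.0) -> list[str]:
    """Return the reasons, if any, why this delivery deserves a closer look."""
    reasons = []
    if event.delivered and not event.customer_confirmed:
        reasons.append("delivered_without_confirmation")
    offset = haversine_m(event.scan_lat, event.scan_lon, event.address_lat, event.address_lon)
    if offset > max_offset_m:
        reasons.append("scan_far_from_address")
    return reasons


normal = DeliveryEvent("PKG-1", True, True, 52.5200, 13.4050, 52.5200, 13.4050)
suspect = DeliveryEvent("PKG-2", True, False, 52.5300, 13.4050, 52.5200, 13.4050)
print(flag_event(normal))
print(flag_event(suspect))
```

The list of reasons is what would be handed to the LLM for cross-referencing with driver history and customer complaints.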

For instance, if a customer reports a missing item, the LLM analyzes the driver’s scan history, route data, and any reported issues via the app’s chatbot. It might identify patterns, like a driver consistently marking high-value packages as delivered without proof, and generate a concise report for the DSP, such as: “Driver X has three unconfirmed deliveries of electronics this week, with GPS data showing extended stops.” This mirrors the dispatcher’s attention to detail and KPI monitoring, automating the process to scale across a fleet of 50 drivers.

The LLM’s natural language capabilities also enhance customer communication. If a customer reports a theft via Amazon’s support system, the LLM summarizes their complaint and correlates it with driver data, flagging potential issues for the DSP. This real-time insight ensures rapid issue resolution, a core dispatcher responsibility, without overwhelming support teams.

When potential theft is flagged, Peccy AI supports dispatchers in resolving incidents efficiently. The LLM-powered chatbot engages drivers directly, asking clarifying questions like, “Can you confirm the delivery status of package #1234?” or “Please upload a photo of the delivery location.” These interactions, logged in MongoDB, create an auditable trail, deterring dishonest behavior. The LLM analyzes driver responses for inconsistencies, using sentiment and context analysis to detect evasive language, such as vague explanations for missing packages.

For DSPs, the system presents a dashboard with clear, LLM-generated summaries of flagged incidents, including evidence like GPS data, scan logs, and driver communications. This empowers dispatchers to make informed decisions, aligning with their problem-solving and leadership skills. For example, a DSP might use the report to initiate a quality meeting, as they would in their afternoon operations, to address the issue with the driver and set improvement goals, such as stricter adherence to scanning protocols.

Peccy AI doesn’t just react to theft—it learns to prevent it, embodying the dispatcher’s focus on continuous improvement. The LLM analyzes patterns across theft incidents to identify root causes, such as routes with minimal oversight or drivers with high workloads. It then suggests systemic changes, like adjusting Cortex routes to include more frequent check-ins or redistributing high-value packages to experienced drivers. These insights are pushed to the DSP dashboard, enabling dispatchers to implement cost-saving and efficiency measures, similar to their achievements in optimizing vehicle usage.

The system also integrates with the Vehicle Management System (VMS) to monitor vehicle behavior, such as unexpected stops, which could indicate theft opportunities. By combining VMS data with LLM analysis, Peccy AI recommends fleet management tweaks, like scheduling maintenance during high-risk routes to reduce downtime, aligning with the dispatcher’s cost-optimization skills.

The solution is embedded in Peccy AI’s existing architecture. The React frontend displays real-time alerts for drivers (e.g., scanning reminders) and DSPs (e.g., anomaly reports). The Node.js backend, with MongoDB, stores delivery logs and integrates with Cortex, VMS, and Amazon Chime for data and communication. The anomaly detection model, trained on historical delivery data, runs on AWS EC2, while the LLM (e.g., a fine-tuned LLaMA or Meta AI) processes complex analytics and chatbot interactions in the cloud. Small language models (SLMs) on driver devices handle lightweight tasks, like prompting scan reminders, ensuring low latency even offline.

Security is critical, as theft detection involves sensitive data. End-to-end encryption protects driver and customer information, and Dataverse stores logs securely. Ollama enables on-premise LLM processing for proprietary data, ensuring compliance. Implementation involves training the anomaly detection model on delivery discrepancies and fine-tuning the LLM on logistics-specific language (e.g., scan protocols, customer complaints). The testing target is to flag 95% of potential theft cases accurately, with the chatbot responding in under a second. Deployment uses AWS Lambda for serverless tasks, like alert generation, and Azure DevOps for continuous updates.

The core idea is to make Peccy AI the central hub for drivers, where a single login grants access to Mentor (for training and performance tracking), Amazon Flex (for delivery scheduling and route management), and EdTime (for time tracking). This reduces friction, letting drivers focus on deliveries rather than app management. The system builds on the dispatcher’s role in coordinating drivers and ensuring they have the tools needed for success, using technology to scale their efforts.

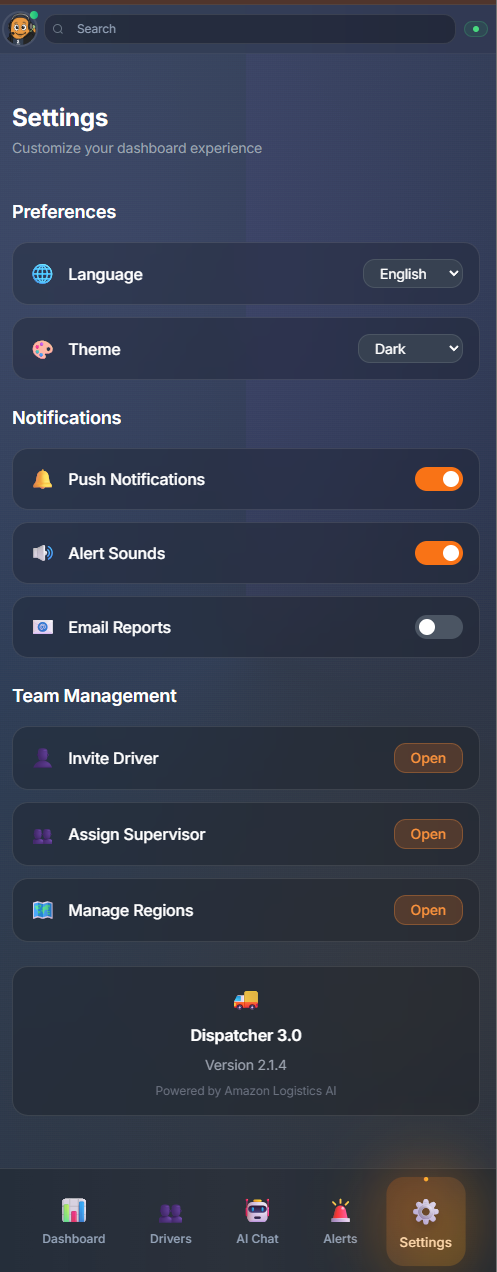

Peccy AI implements SSO using an identity provider (IdP) like AWS Cognito, which securely authenticates users and manages access tokens for all three applications. When a driver logs into Peccy AI via their smartphone, they enter one set of credentials—say, their Amazon employee ID and password. Cognito verifies this against Amazon’s authentication system, then issues JSON Web Tokens (JWTs) that grant access to Mentor, Amazon Flex, and EdTime APIs. This eliminates the need for separate logins, as Peccy AI acts as a gateway, passing authenticated requests to each service in the background. For drivers, it feels like one app, but it’s seamlessly pulling data and functionality from all three.
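
To show what a token carrying access to several services looks like, here is a self-contained sketch of issuing and checking a JWT. It is an illustration of the token structure only. It signs with a shared secret (HS256) so that it runs with the standard library, whereas Cognito signs its tokens with RS256 and publishes public keys for verification. The audience names `mentor`, `flex`, and `edtime` and the function names are assumptions.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    # JWTs strip base64 padding, so restore it before decoding.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(secret: bytes, signing_input: str) -> bytes:
    return hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()


def issue_token(secret: bytes, driver_id: str, audiences: list[str], now: int, ttl_s: int = 3600) -> str:
    """Build a signed token whose `aud` claim lists the services it unlocks."""
    header = {"alg": "HS256", "typ": "JWT"}
    payload = {"sub": driver_id, "aud": audiences, "exp": now + ttl_s}
    parts = [
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    ]
    signing_input = ".".join(parts)
    return signing_input + "." + _b64url(_sign(secret, signing_input))


def verify_token(secret: bytes, token: str, audience: str, now: int) -> Optional[dict]:
    """Return the claims if the token is authentic, unexpired, and valid for `audience`."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        signature = _b64url_decode(sig_b64)
        expected = _sign(secret, f"{header_b64}.{payload_b64}")
        header = json.loads(_b64url_decode(header_b64))
        payload = json.loads(_b64url_decode(payload_b64))
    except ValueError:
        return None  # Malformed token.
    if header.get("alg") != "HS256":
        return None
    if not hmac.compare_digest(signature, expected):
        return None
    if payload["exp"] <= now or audience not in payload["aud"]:
        return None
    return payload


secret = b"demo-secret-not-for-production"
token = issue_token(secret, "driver-42", ["mentor", "flex", "edtime"], now=int(time.time()))
print(verify_token(secret, token, "mentor", now=int(time.time())))
```

In a real deployment the gateway would rely on a maintained JWT library and the identity provider's published keys rather than hand-rolled verification.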

By integrating Mentor, Amazon Flex, and EdTime into Peccy AI with SSO, the system eliminates the hassle of multiple logins, letting drivers focus on deliveries. It scales the dispatcher’s expertise—coordinating teams, optimizing workflows, and driving performance—through AI and LLMs. The result is a more efficient, error-free operation that aligns with the dispatcher’s customer-centric focus and notable achievements in reducing delivery times and costs.

The challenge of drivers needing to log into multiple applications—Mentor, Amazon Flex, and EdTime—presents a clear opportunity to streamline their workflow. Forcing drivers to juggle three separate logins is inefficient, increases error rates, and frustrates users, which can impact delivery performance and KPIs like PHR or scan compliance. Peccy AI can solve this by integrating these applications into a single, unified interface with a single sign-on (SSO) system, leveraging AI and LLMs to simplify access, consolidate functionality, and enhance the driver experience while aligning with the dispatcher’s responsibilities of team coordination, communication, and operational efficiency. Here’s how I’d build this solution, embedding it into Peccy AI’s architecture to create a seamless, secure, and efficient system.

Smart Dispatch. Smarter Insights.

Peccy AI is built on a state-of-the-art tech stack, leveraging Amazon Web Services (AWS) and industry-leading AI frameworks to ensure scalability, security, and performance. Below is a detailed breakdown of our technical architecture, designed to integrate seamlessly with Amazon’s DSP ecosystem.

Peccy AI is designed to be an indispensable assistant for Amazon DSP Runex drivers, providing real-time support for challenges encountered on the road. From technical issues with package lockers, vehicle malfunctions, communication with customers of different nationalities, to managing notes for future reference, Peccy AI integrates artificial intelligence (AI) and large language models (LLMs) to deliver fast and effective solutions. This section outlines practical support features, with concrete examples, that help drivers resolve issues quickly, maintain performance, and adhere to Amazon’s standards.

Package lockers can experience issues such as scanning errors, jammed doors, or connectivity problems. Peccy AI provides immediate support through the driver’s mobile app:

Interactive LLM-based Manual: When a driver reports an issue (e.g., “The locker doesn’t recognize the scan code”), the integrated LLM chatbot provides a personalized step-by-step guide. For example:

“Check your device’s internet connection.”

“Scan the code from a different angle or clean the device’s camera.”

“If the error persists, press the locker’s reset button (located at the bottom right).”

Visual Instructions: Peccy AI displays diagrams or short video clips (stored in AWS S3) illustrating common solutions, such as manually opening a locker.

Automatic Escalation: If the issue cannot be resolved, the LLM chatbot notifies the dispatcher via Amazon Chime, providing a summary of the problem and steps attempted.

Example: A driver encounters a “Code invalid” error at a locker. They report the issue in the app, and Peccy AI suggests checking the code, then provides a video on resetting the locker. If the issue persists, the dispatcher is alerted within 30 seconds.
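
The guide-then-escalate flow above can be modeled as a small session object. In this sketch the three steps are taken from the locker guide in this section, while the `LockerSession` class, its method names, and the summary format are assumptions. Sending the summary to the dispatcher via Amazon Chime is left out.

```python
from dataclasses import dataclass, field
from typing import Optional

# Troubleshooting steps as listed in the locker guide above.
LOCKER_STEPS = [
    "Check your device's internet connection.",
    "Scan the code from a different angle or clean the device's camera.",
    "If the error persists, press the locker's reset button (located at the bottom right).",
]


@dataclass
class LockerSession:
    issue: str
    attempted: list[str] = field(default_factory=list)

    def next_step(self) -> Optional[str]:
        """Return the next untried step, or None once the guide is exhausted."""
        if len(self.attempted) < len(LOCKER_STEPS):
            step = LOCKER_STEPS[len(self.attempted)]
            self.attempted.append(step)
            return step
        return None

    def escalation_summary(self) -> str:
        """Summarize the problem and the steps tried, for the dispatcher."""
        tried = "; ".join(self.attempted) or "none"
        return f"Locker issue: {self.issue}. Steps attempted ({len(self.attempted)}): {tried}"


session = LockerSession("Code invalid")
while (step := session.next_step()) is not None:
    print("Try:", step)
# All steps used without success, so the dispatcher gets the summary.
print(session.escalation_summary())
```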

Vehicle malfunctions, such as a flat tire, low oil, or coolant levels, can delay deliveries. Peccy AI offers practical guides and alerts to keep vehicles operational:

Maintenance Guides:

Tire Change: The app provides a step-by-step tutorial (text and video) for changing a tire, including tool locations (e.g., “The jack is under the passenger seat”).

Adding Oil/Coolant: The LLM generates clear instructions, such as “Open the hood, locate the coolant reservoir (labeled ‘Coolant’), and add distilled water up to the max line.”

Proactive Alerts: Integration with the Vehicle Management System (VMS) detects low oil or coolant levels via telemetry data and alerts the driver before the issue becomes critical.

Emergency Assistance: If a repair isn’t feasible, Peccy AI contacts the dispatcher or a roadside assistance service via Amazon Chime, providing the driver’s GPS location.

Example: A driver receives an alert about low oil levels. Peccy AI provides a video guide for checking and adding oil, using VMS data to confirm the required oil type. If the engine won’t start, the app requests roadside assistance.
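
A proactive alert boils down to comparing telemetry readings against minimums before the driver notices a problem. The sketch below assumes that VMS telemetry arrives as percentage fill levels under the hypothetical keys `oil_level_pct` and `coolant_level_pct`. The threshold values are placeholders, not manufacturer figures.

```python
def maintenance_alerts(telemetry: dict[str, float], minimums: dict[str, float]) -> list[str]:
    """Compare each monitored reading with its minimum and describe any shortfall."""
    alerts = []
    for name, minimum in minimums.items():
        reading = telemetry.get(name)
        if reading is None:
            # A missing sensor value is worth surfacing too.
            alerts.append(f"{name}: no reading")
        elif reading < minimum:
            alerts.append(f"{name}: {reading:.0f}% is below the {minimum:.0f}% minimum")
    return alerts


# Placeholder thresholds and one hypothetical telemetry snapshot.
MINIMUMS = {"oil_level_pct": 25.0, "coolant_level_pct": 30.0}
snapshot = {"oil_level_pct": 18.0, "coolant_level_pct": 80.0}
print(maintenance_alerts(snapshot, MINIMUMS))
```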

When drivers interact with customers who speak a different language, Peccy AI facilitates communication through real-time voice translation:

Technology: Uses Amazon Transcribe for speech recognition and Amazon Translate for instant translation into languages like Spanish, French, Mandarin, or Hindi.

Functionality: The driver speaks into the app (e.g., “The package is at the front door”), and Peccy AI translates and plays the message aloud in the customer’s language. The customer’s response is translated back into English or the driver’s language.

LLM Contextualization: The LLM adds logistics-specific context (e.g., terms like “delivery” or “signature”), ensuring accurate and relevant translations.

Example: A driver delivers to a Spanish-speaking customer. They say in the app, “Please sign here,” and Peccy AI plays “Por favor, firme aquí.” The customer’s response, “¿Dónde está el paquete?” is translated as “Where is the package?” for the driver.
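
The text-translation step of this flow can be sketched as a thin wrapper around Amazon Translate. The `translate_text` call and its `Text`, `SourceLanguageCode`, and `TargetLanguageCode` parameters are part of the boto3 Translate client. Everything else is an assumption: the wrapper name is hypothetical, and the stub client exists only so the example runs offline without AWS credentials. Speech recognition and audio playback are not covered.

```python
def translate_message(client, text: str, source_lang: str, target_lang: str) -> str:
    """Translate one utterance with any client exposing boto3's translate_text."""
    response = client.translate_text(
        Text=text,
        SourceLanguageCode=source_lang,
        TargetLanguageCode=target_lang,
    )
    return response["TranslatedText"]


class StubTranslateClient:
    """Offline stand-in that mimics the shape of the Translate response."""

    _PHRASES = {
        ("en", "es", "Please sign here"): "Por favor, firme aquí",
        ("es", "en", "¿Dónde está el paquete?"): "Where is the package?",
    }

    def translate_text(self, Text, SourceLanguageCode, TargetLanguageCode):
        # Unknown phrases are echoed back unchanged in this stub.
        translated = self._PHRASES.get((SourceLanguageCode, TargetLanguageCode, Text), Text)
        return {
            "TranslatedText": translated,
            "SourceLanguageCode": SourceLanguageCode,
            "TargetLanguageCode": TargetLanguageCode,
        }


client = StubTranslateClient()
print(translate_message(client, "Please sign here", "en", "es"))
print(translate_message(client, "¿Dónde está el paquete?", "es", "en"))
```

With AWS credentials configured, passing `boto3.client("translate")` instead of the stub should work without changing `translate_message`.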

Peccy AI allows drivers to save notes about deliveries or locations, which the system retains and reuses to streamline future deliveries:

Note Recording: Drivers can input text or voice notes (e.g., “The back gate is locked, use the side entrance at address X”). Amazon Transcribe converts voice notes to text, and MongoDB stores them linked to addresses or customers.

Contextual Access: When a driver approaches an address, Peccy AI automatically displays relevant notes (e.g., “Warning: aggressive dog at address Y”).

Long-Term Memory: The LLM analyzes notes to detect patterns (e.g., recurring issues at specific addresses) and suggests updates to Cortex for improved route planning.

Example: A driver notes, “Customer prefers delivery at the garage.” On the next visit to the same address, Peccy AI displays the note, saving time and avoiding errors.
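As a rough sketch of the contextual access step, the snippet below keeps notes in memory and returns the ones within a small radius of the driver's position. The `DeliveryNote` fields and the 75 m radius are assumptions made for illustration; in the real system the same lookup would more likely be a geospatial query against MongoDB.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class DeliveryNote:
    """A saved driver note. Field names are hypothetical."""
    address: str
    lat: float
    lon: float
    text: str


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two latitude/longitude points."""
    earth_radius_m = 6_371_000.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * earth_radius_m * math.asin(math.sqrt(a))


def notes_nearby(notes: Sequence[DeliveryNote], lat: float, lon: float,
                 radius_m: float = 75.0) -> List[DeliveryNote]:
    """Return notes within radius_m of the driver, closest first."""
    scored = [(haversine_m(lat, lon, n.lat, n.lon), n) for n in notes]
    scored.sort(key=lambda pair: pair[0])
    return [note for distance, note in scored if distance <= radius_m]
```

Calling `notes_nearby` each time the driver's GPS position updates is enough to surface a note like "Customer prefers delivery at the garage" just before arrival.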

Peccy AI extends support for drivers through additional features addressing common challenges:

Emergency Situation Guide: If a driver is involved in a minor accident, the app provides a checklist (e.g., “Take photos of the damage, contact the dispatcher, complete the report in Amazon Flex”) and automatically notifies the dispatcher.

Weather Alerts: Integration with real-time weather data warns drivers about heavy rain or slippery roads, suggesting alternative routes or breaks.

Support for Difficult Customers: If a customer refuses a delivery, the LLM chatbot provides guidance (e.g., “Document the refusal with a photo and report it in the app”) and generates a report for the dispatcher.

Stress Management: Peccy AI detects stress patterns (e.g., prolonged delivery times or multiple errors) and sends motivational messages or suggests short breaks, based on LLM analysis of performance data.

Device Status Check: The app monitors the driver’s device battery and app connectivity (Mentor, Amazon Flex, EdTime), alerting if the battery is below 20% or an app is disconnected.

Example: During a storm, Peccy AI warns a driver about a flooded road and suggests an alternative route. If the driver reports an aggressive customer, the app provides a script for handling the situation and sends a report to the dispatcher.
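The device status check is the simplest of these features to pin down. A minimal sketch, assuming the app can read a battery percentage and a per-app connectivity flag (how those values are obtained on the device is outside this sketch, and the function name is hypothetical):

```python
from typing import Dict, List

LOW_BATTERY_PCT = 20  # threshold named in the feature description
REQUIRED_APPS = ("Mentor", "Amazon Flex", "EdTime")  # apps named above


def device_alerts(battery_pct: int, app_connected: Dict[str, bool]) -> List[str]:
    """Return alert messages for low battery and disconnected apps.

    An app missing from app_connected is treated as disconnected.
    """
    alerts = []
    if battery_pct < LOW_BATTERY_PCT:
        alerts.append(f"Battery at {battery_pct}%: plug in the device")
    for app in REQUIRED_APPS:
        if not app_connected.get(app, False):
            alerts.append(f"{app} is disconnected")
    return alerts
```

Treating an unknown app state as "disconnected" errs on the side of warning the driver rather than staying silent.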

Increased Efficiency: Rapid issue resolution is projected to cut downtime by about 20%, keeping deliveries on schedule.

Improved Performance: Guides and training are projected to reduce errors (e.g., incorrect locker scans) by about 15%.

Driver Satisfaction: Instant support and voice translation reduce stress, improving retention.

Customer Experience: Clear communication with customers who speak other languages is projected to raise satisfaction by about 10%.

Peccy AI transforms on-road challenges into opportunities for efficiency, providing drivers with practical tools to manage technical issues, vehicles, customer communication, and information organization. By integrating AI, LLMs, and Amazon’s ecosystem, Peccy AI ensures fast, safe, and compliant deliveries, solidifying its role as a strategic partner for drivers and dispatchers.

🚛💨 With Peccy AI, we don’t just track performance—we improve it!

Building Peccy AI starts with understanding user needs. Drivers want simple tools to navigate routes and improve performance; DSPs need clear insights into fleet efficiency. Development splits into parallel tracks: the frontend team builds React dashboards, while the backend team sets up Node.js APIs and MongoDB. The AI team trains small language models (SLMs) for on-device tasks and LLMs for cloud-based analytics, using delivery logs to fine-tune both. Reinforcement learning for routing and an LSTM model for KPI forecasting are prioritized because they address the core pain points.

Testing is rigorous. SLMs must respond in under a second on mid-range smartphones, ensuring drivers aren’t slowed down. LLMs need 95% accuracy in generating training content or KPI insights. User testing with drivers and DSPs refines the UI, ensuring it’s intuitive under the pressure of a delivery shift. Deployment leverages AWS for scalability—EC2 for compute, S3 for storage, and Lambda for serverless tasks like KPI updates. Azure DevOps automates CI/CD pipelines, so updates roll out seamlessly.
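The two acceptance criteria above (sub-second SLM responses and 95% LLM accuracy) can be expressed as a simple release gate. The sketch below is one possible shape for it, not the project's actual test harness: the function names are hypothetical, and `respond` stands in for whatever callable wraps the on-device model.

```python
import time
from typing import Callable, List, Sequence

MAX_LATENCY_S = 1.0   # "under a second" from the testing criteria
MIN_ACCURACY = 0.95   # 95% accuracy target from the testing criteria


def measure_latencies(respond: Callable[[str], str],
                      prompts: Sequence[str]) -> List[float]:
    """Time each call to the model wrapper and return durations in seconds."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        respond(prompt)
        latencies.append(time.perf_counter() - start)
    return latencies


def accuracy(predictions: Sequence[str], expected: Sequence[str]) -> float:
    """Fraction of predictions that exactly match the expected answers."""
    if not expected or len(predictions) != len(expected):
        raise ValueError("predictions and expected must be non-empty and equal length")
    correct = sum(1 for p, e in zip(predictions, expected) if p == e)
    return correct / len(expected)


def release_gate(latencies: Sequence[float], model_accuracy: float) -> bool:
    """Pass only if every response was under the limit and accuracy meets the target."""
    return max(latencies) < MAX_LATENCY_S and model_accuracy >= MIN_ACCURACY
```

Exact-match accuracy is a simplification: generated training content would need a rubric or human review to score, so the `accuracy` helper fits best for structured outputs such as KPI values. Latency should be measured on the mid-range phones drivers actually carry, not on a development machine.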

Training is critical for adoption. Drivers learn to use the app through hands-on sessions, seeing how one-tap route adjustments or instant KPI feedback simplifies their day. DSPs get guides on interpreting analytics and acting on AI insights. Post-launch, continuous feedback drives improvements. If drivers report slow rerouting, the reinforcement learning model gets a tweak. If DSPs need better cost breakdowns, the LLM’s reporting capabilities are enhanced.

Are you ready to take your DSP team's efficiency to the next level? 🚀

Made with ❤️ by Cristian and Alberto

Building AI tools, delivering value, and vibing with the tech community. 🚀

Let’s connect, collaborate, or just say hi! 👋
